Last weekend, Aisha was rushing to catch her flight. With one hand holding her coffee and the other dragging a suitcase, she simply said, “Hey travel app, check me in for my flight.”

Her travel app recognized her voice instantly, confirmed her booking, and brought up a QR boarding pass on screen.

A few minutes later, at the airport’s shop, she pointed her phone camera at a pair of sunglasses. The app scanned them and instantly suggested cheaper options online, same model, same color, but at half the price.

Neither moment felt extraordinary to her. But just a few years ago, AI-Enabled Image and Voice Recognition Features in Apps like these would have sounded futuristic. Today, they’re so seamless that many users barely notice the complex AI image recognition in apps and speech technologies working in the background.

Top apps today combine computer vision, automatic speech recognition (ASR), and natural language understanding (NLU) to create interactions that feel fast, personal, and (dare we say) a little magical.

Here, we’ll understand the evolution of AI in mobile applications, unpack AI image and voice recognition: core components and technologies, and explore advanced image recognition capabilities and use cases.

Insider Intelligenc found that over a quarter about 26% of U.S. adults currently use or plan to use AI-powered voice assistants on their smartphones, making voice the most popular AI feature on mobile right now.

Mobile AI has gone through three big waves:

Apps captured audio or images and shipped them to the cloud for processing. This enabled early breakthroughs, like accurate voice transcription and object tagging, but it introduced latency and raised privacy questions.

Models got smaller and phones got faster. AI mobile App Developers began running lightweight models on-device for instant responses e.g., wake word detection, face unlock, offline translation, and falling back to the cloud for heavier tasks. This cut network costs and made features more resilient.

With NPUs integrated into mainstream chips, phones increasingly run image and voice models locally for summarization, translation, scene understanding, and multimodal search. The promise: snappier UX, stronger privacy, and smarter features that work even with a spotty signal. Analysts expect this trend to accelerate as phones ship with silicon specifically optimized for AI workloads.

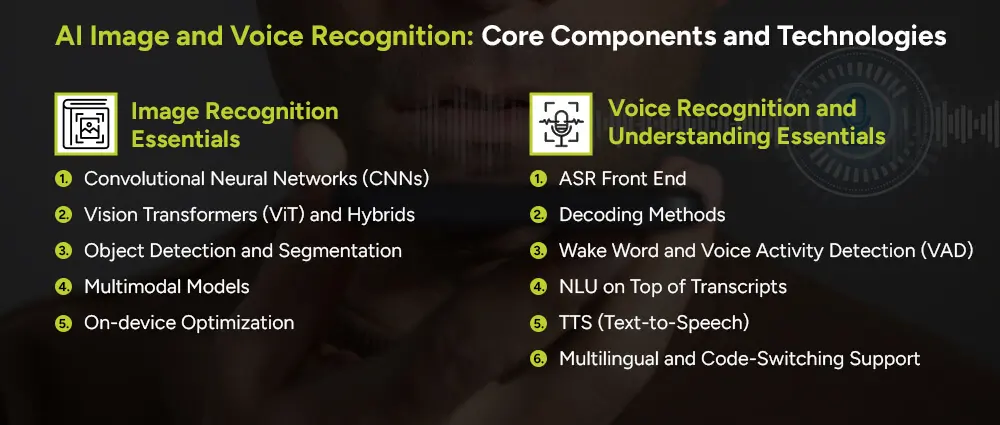

Both modalities rely on a similar foundation, representation learning, but the details differ:

The main technology for identifying images and extracting features. Examples include ResNet and EfficientNet.

Transformers break images into patches and use attention to find patterns. They often match or beat CNNs on large datasets and are easier to scale.

Models like YOLO and DETR can detect objects and draw boxes around them. Mask R-CNN and SAM go further, identifying the exact pixels for each object.

Systems like CLIP connect images and text in the same space. This enables features like visual search, like asking “find shoes like this,” and smart captions.

Techniques like quantization, pruning, and distillation make AI models smaller and faster so they can run smoothly on mobile devices.

Automatic Speech Recognition (ASR) converts audio into text. Modern systems use Conformer models for more accurate results, replacing older approaches.

CTC, Transducer, and Attention decoders help align audio with text efficiently and accurately.

Small models listen for trigger phrases like “Hey Siri” and detect when you start speaking. These usually run on-device for speed and privacy.

Natural Language Understanding (NLU) takes the text, figures out the intent, and pulls out key details to complete actions.

Turns text back into natural-sounding voices, making assistants conversational.

Essential in places where users mix languages in one sentence. Modern models handle this much better than older systems, which is why app modernization is highly essential for SMBs and large-scale businesses today.

You don’t need to build a full Siri. Start with small wins and scale.

1) Define your voice moments

Figure out where speaking is better than tapping. Examples include:

2) Decide where AI runs

3) Build the pipeline

4) UX considerations

5) Privacy and trust

Voice is already popular on mobile. In fact, 26% of U.S. adults use or plan to use AI-powered voice assistants on their smartphones (YouGov/Insider Intelligence). Designed well, voice can become the most natural and convenient way for users to interact with your app. (EMARKETER)

Image recognition has moved far beyond “is this a cat?” Here’s where teams are gaining traction:

Shoppers snap a picture and find similar items; travelers point their camera to identify landmarks. Multimodal embeddings (think CLIP-like) power “find me something like this” experiences that boost conversion and retention.

Face and hand tracking enable makeup try-ons, eyewear fitting, ring sizing, and clothes try-on in the retail and ecommerce business. Scene geometry estimation and segmentation let users preview furniture at scale in their living room.

Healthcare professionals pose estimation tracks form during workouts; dermatology apps flag lesions to discuss with a clinician; diet apps recognize packaged foods and nutrition labels.

From a market standpoint, computer vision is one of AI’s fastest-moving domains. Analysts track rapid growth as industries adopt vision for automation and edge use cases.

Recognition is just the beginning. The real value comes when your app adapts to users in the moment. Context-aware UIs can detect a document and switch to “Scan” mode, or change language settings if the user speaks in Urdu. Apps can remember frequent actions and suggest them, summarize bursts of photos or voice notes, and connect user-captured images to relevant catalog items. The key is to personalize in a helpful, transparent way while giving users control.

Architecture choices: where AI runs

A mix of on-device and cloud processing. For example, detect wake words locally, then send complex or uncertain requests to the cloud. Sensitive tasks can be checked in both places.

With more GenAI-capable smartphones and better NPUs, more AI features can run locally each year, improving speed, privacy, and user trust.

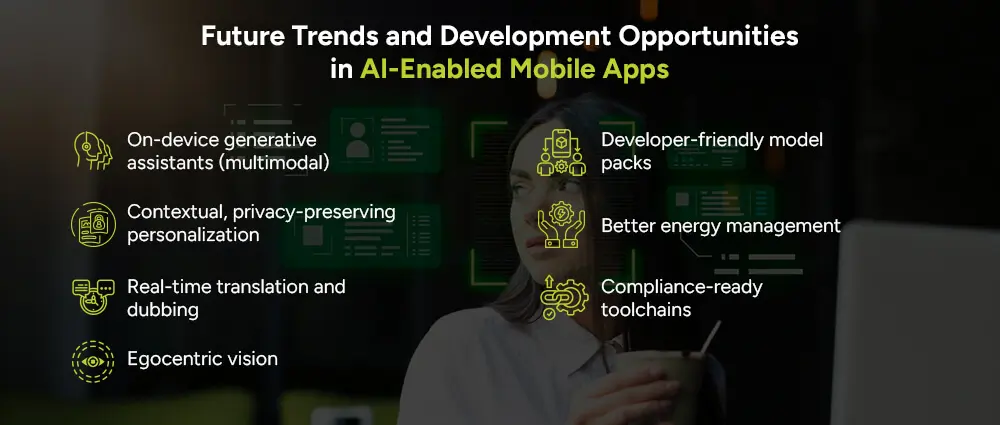

Soon, you’ll be able to select a photo album and ask your phone to create a story with highlights, all without using the cloud. With more powerful NPUs, this will be possible for everyday users.

App frameworks will adapt to your needs while keeping your data on your device, thanks to technologies like federated learning and differential privacy.

On-device speech-to-speech translation with voice cloning (with consent) will make conversations in different languages smooth and natural.

From phone cameras to wearables, apps will understand what you see and do, enabling training, repair, and accessibility tools.

App stores and device makers will offer pre-built AI model bundles, making it easier for developers to add vision, voice, and other capabilities.

AIaaS tasks will be scheduled smartly to save battery, running heavy processing when plugged in and scaling back when needed.

Built-in tools for consent, audit logs, and data handling will help developers meet privacy and compliance standards. Therefore, whether you’re in the fintech, banking, or healthcare sector, you’ll always be goodwith compliance on all ends.

AI works by learning patterns from data and applying that knowledge to new situations.

For images, AI models are trained on millions of pictures to recognize things like edges, textures, shapes, and overall context. Modern systems like Convolutional Neural Networks (CNNs) and Vision Transformers turn raw pixels into meaningful information. This allows apps to classify what an image contains, detect specific elements, separate regions of interest, and even find similar items.

For speech, AI listens to sound patterns over time and learns how they map to words, even with background noise or different accents. Advanced models such as Conformer/Transducer can recognize speech in real time, while natural language understanding (NLU) models interpret the meaning and extract details like amounts or names.

With more data and faster hardware, today’s smartphones can perform this recognition almost instantly. On-device AI is becoming standard, making the process faster, more accurate, and more private

The key to building AI-powered mobile experiences is to start small but smart. Focus on one high-value user journey, such as scanning receipts and extracting totals or enabling voice-controlled playback. Prototype using ready-made AI models or APIs and test on your target devices to ensure speed and accuracy. Decide what runs on-device and what runs in the cloud, then optimize with techniques like caching.

Build a smooth fallback for when AI is not perfect, then launch to a small group, gather feedback, and make improvements. Over time, expand with features like multilingual support, personalization, and multimodal search. Continuously test in different lighting, noise, and accent conditions while keeping privacy as a top priority.

When done well, users will feel like your app just works, delivering a fast, helpful, and secure experience. At Arpatech, we specialize in integrating AI seamlessly into mobile apps. Let us help you bring your next smart app idea to life.

AI enhances visuals by improving capture quality (clearer low-light and motion shots), automating tasks (detecting documents, fixing perspective, running OCR), personalizing content (curated galleries, smart edits), and boosting accessibility (scene descriptions, object recognition). It also makes AR more realistic with accurate depth and segmentation.

Image: Convolutional Neural Networks (CNNs) and Vision Transformers (ViTs) for classification, detection, and segmentation.

Speech: Conformer-Transducer for streaming recognition, paired with transformer-based models for understanding context.